Image Processing Chap07: Image Features and Descriptor Extraction

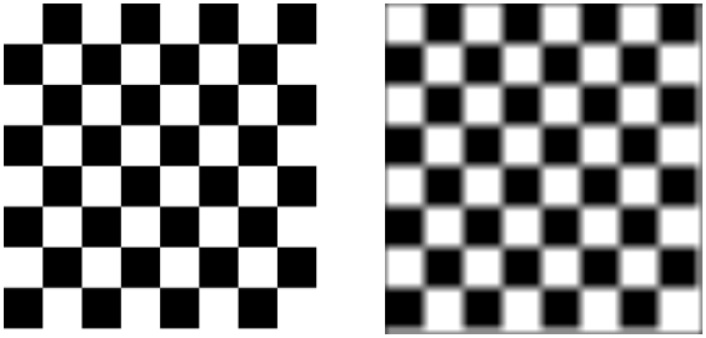

- Convolution using the signal module of the SciPy library

- SciPy API: https://docs.scipy.org/doc/scipy/reference/index.html

- signal.convolve2d() API: https://docs.scipy.org/doc/scipy/reference/generated/scipy.signal.convolve2d.html

- Convolution with the signal.convolve2d() function

```python
from PIL import Image
import matplotlib.pyplot as plt
import numpy as np
from scipy import signal

# Uncomment exactly one kernel (named `kernel` to avoid shadowing the built-in filter()).
#kernel = [[1/9., 1/9., 1/9.], [1/9., 1/9., 1/9.], [1/9., 1/9., 1/9.]] # smoothing
kernel = np.ones((11,11)) / 121                                        # 11x11 smoothing
#kernel = [[0., -1., 0.],[-1., 4., -1.],[0., -1., 0.]] # Laplacian
#kernel = [[-1., 0., 1.],[-1., 0., 1.],[-1., 0., 1.]] # Prewitt 1
#kernel = [[1., 1., 1.],[0., 0., 0.],[-1., -1., -1.]] # Prewitt 2
#kernel = [[-1., 0., 1.],[-2., 0., 2.],[-1., 0., 1.]] # Sobel 1
#kernel = [[1., 2., 1.],[0., 0., 0.],[-1., -2., -1.]] # Sobel 2
#kernel = [[0., 1.],[-1., 0.]] # Roberts 1
#kernel = [[1., 0.],[0., -1.]] # Roberts 2

im = Image.open('./images/chess.png')
#im = Image.open('./images/chess_football.png')
im = im.convert('L')       # convert to 8-bit grayscale
im = np.array(im)

# mode='same' returns an output with the same size as the input image
im2 = signal.convolve2d(im, kernel, mode='same')

plt.figure(figsize=(10,5))
plt.subplot(1,2,1); plt.axis('off'); plt.imshow(im, cmap='gray')
plt.subplot(1,2,2); plt.axis('off'); plt.imshow(im2, cmap='gray')
plt.show()
```

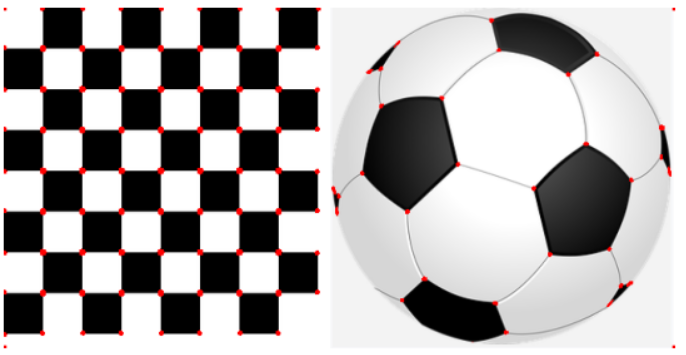

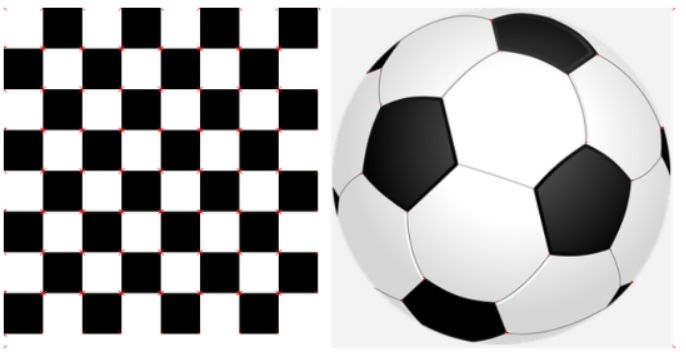
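To see what the kernels in the convolution listing do without needing an image file, the following is a minimal sketch on a synthetic step edge. The 5x6 test array and the `boundary='symm'` option are assumptions made for this illustration; the listing above uses the default zero-padded boundary.

```python
import numpy as np
from scipy import signal

# Synthetic 5x6 image: dark left half, bright right half (a vertical step edge).
image = np.zeros((5, 6))
image[:, 3:] = 1.0

box = np.ones((3, 3)) / 9.0                      # 3x3 smoothing (box) kernel
sobel_x = np.array([[-1., 0., 1.],
                    [-2., 0., 2.],
                    [-1., 0., 1.]])              # the "Sobel 1" kernel

# boundary='symm' mirrors the image at its borders instead of zero-padding,
# so the borders themselves do not show up as edges.
smoothed = signal.convolve2d(image, box, mode='same', boundary='symm')
edges = signal.convolve2d(image, sobel_x, mode='same', boundary='symm')

print(smoothed.shape == image.shape)   # mode='same' keeps the input size
print(smoothed[2])                     # the step is blurred into a ramp
print(np.abs(edges[2]))                # response is nonzero only next to the step
```

The smoothing kernel spreads the step over neighboring columns, while the Sobel kernel responds only at the two columns adjacent to the edge and is zero in the flat regions.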

- Finding features with skimage's corner_harris() function

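```python
from PIL import Image
import matplotlib.pyplot as plt
import numpy as np
from skimage.feature import corner_harris

#im = Image.open('./images/chess.png')
im = Image.open('./images/chess_football.png')
im2 = im.copy()
im2 = im2.convert('L')     # grayscale copy used for corner detection
im2 = np.array(im2)

# Harris response map (same shape as the image); k is the sensitivity factor
coordinates = corner_harris(im2, k=0.001) # k=0.2

# Convert to RGBA so that the 4-element red marker below always fits
im = np.array(im.convert('RGBA'))
# Paint every pixel whose response exceeds 1% of the maximum in red
im[coordinates>0.01*coordinates.max()]=[255,0,0,255]

plt.figure(figsize=(10,5))
plt.axis('off')
plt.imshow(im, cmap='gray')
plt.show()
```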

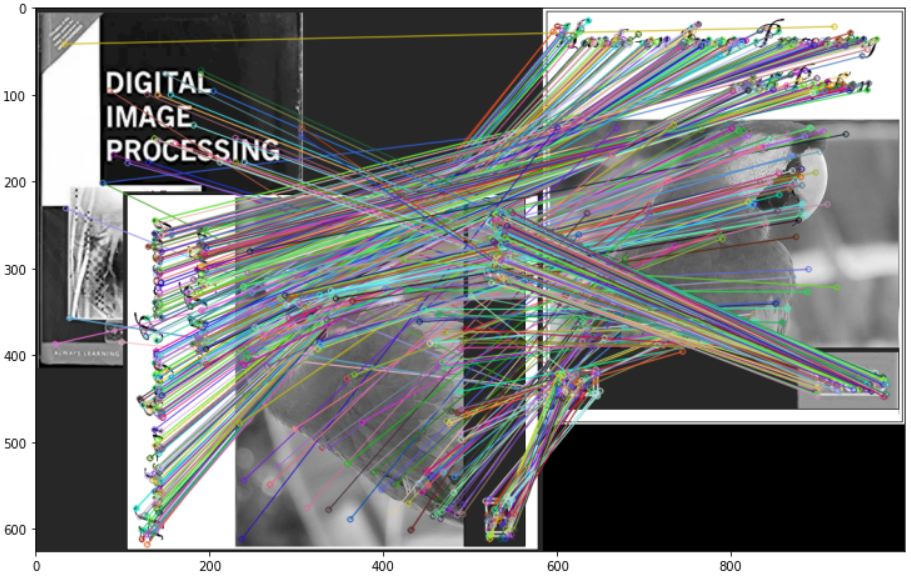

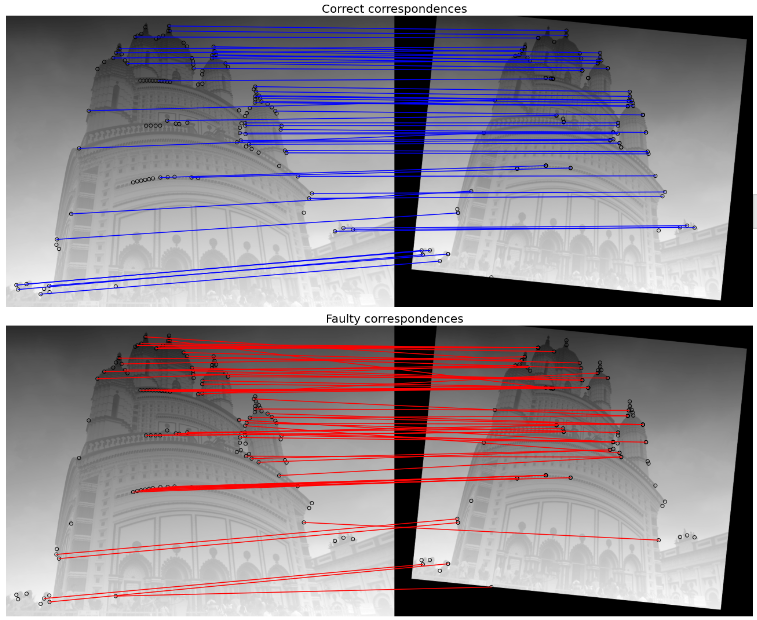
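The Harris listing thresholds the response map directly. As a small sketch of how the response relates to actual corner positions, the example below runs `corner_harris()` on a synthetic square (an assumed test image, not one of the course images) and extracts the local maxima with `corner_peaks()`.

```python
import numpy as np
from skimage.feature import corner_harris, corner_peaks

# Synthetic test image: a bright 6x6 square on a dark 10x10 background.
square = np.zeros((10, 10))
square[2:8, 2:8] = 1

response = corner_harris(square)                  # Harris response, same shape as the image
peaks = corner_peaks(response, min_distance=1)    # (row, col) of local response maxima

# Thresholding as in the listing: keep pixels above 1% of the strongest response.
mask = response > 0.01 * response.max()

print(response.shape)
print(peaks)          # one (row, col) pair per corner of the square
print(mask.sum())     # number of pixels that would be painted red
```

The threshold mask marks a small neighborhood around each corner, whereas `corner_peaks()` reduces each neighborhood to a single coordinate, which is the form needed later for descriptor extraction and matching.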

- Image matching with the RANSAC algorithm

- Load image 1, generate image 2 by applying an affine transform to it, then find Harris corner features in both images

```python
from PIL import Image
import matplotlib.pyplot as plt
import numpy as np
from skimage.io import imread
from skimage.util import img_as_float
from skimage.color import rgb2gray
from skimage.feature import corner_harris, corner_peaks, corner_subpix
from skimage.transform import AffineTransform, warp
from skimage.exposure import rescale_intensity
from skimage.measure import ransac

temple = rgb2gray(img_as_float(imread('./images/temple.JPG')))
# Build a 3-channel image: channel 0 is the photo, channels 1 and 2 are gradients
image_original = np.zeros(list(temple.shape) + [3])
image_original[..., 0] = temple
mgrid = np.mgrid[0:image_original.shape[0], 0:image_original.shape[1]]
gradient_row, gradient_col = (mgrid / float(image_original.shape[0]))
image_original[..., 1] = gradient_row
image_original[..., 2] = gradient_col
image_original = rescale_intensity(image_original) # rescale intensity

# Create the warped image: scaling, rotation, translation
affine_trans = AffineTransform(scale=(0.8, 0.9), rotation=0.1, translation=(120, -20))
image_warped = warp(image_original, affine_trans.inverse, output_shape=image_original.shape)
image_original_gray = rgb2gray(image_original) # grayscale images
image_warped_gray = rgb2gray(image_warped)

# Extract corners using the Harris corner measure
coordinates = corner_harris(image_original_gray)
coordinates[coordinates > 0.01*coordinates.max()] = 1
coordinates_original = corner_peaks(coordinates, threshold_rel=0.0001, min_distance=5)
coordinates = corner_harris(image_warped_gray)
coordinates[coordinates > 0.01*coordinates.max()] = 1
coordinates_warped = corner_peaks(coordinates, threshold_rel=0.0001, min_distance=5)

# Determine sub-pixel corner positions
coordinates_original_subpix = corner_subpix(image_original_gray, coordinates_original, window_size=9)
coordinates_warped_subpix = corner_subpix(image_warped_gray, coordinates_warped, window_size=9)
coordinates[coordinates < 0] = 0

# Print intermediate results for checking
print(temple.shape, image_original.shape, mgrid.shape)
#print(temple)
#print(image_original[..., 0])
#print(coordinates.shape, coordinates_original.shape)
print(coordinates_original[:5])
print(coordinates_original_subpix[:5])
#np.savetxt('./a.txt', coordinates, fmt='%.1f')
```

- Implementing the functions for the RANSAC algorithm

```python
# Compute pixel weights according to the distance from the center pixel
def gaussian_weights(window_ext, sigma=1):
    y, x = np.mgrid[-window_ext:window_ext+1, -window_ext:window_ext+1]
    g_w = np.zeros(y.shape, dtype=np.double)
    g_w[:] = np.exp(-0.5 * (x**2 / sigma**2 + y**2 / sigma**2))
    g_w /= 2 * np.pi * sigma * sigma
    return g_w

def match_corner(coordinates, window_ext=3):
    row, col = np.round(coordinates).astype(np.intp)
    window_original = image_original[row-window_ext:row+window_ext+1,
                                     col-window_ext:col+window_ext+1, :]

    # Pixel weights according to the distance from the center pixel
    weights = gaussian_weights(window_ext, 3)
    weights = np.dstack((weights, weights, weights))

    # Compute the sum of squared differences (SSD) to every corner in the warped image
    SSDs = []
    for row, col in coordinates_warped:
        window_warped = image_warped[row-window_ext:row+window_ext+1,
                                     col-window_ext:col+window_ext+1, :]
        if window_original.shape == window_warped.shape == weights.shape:
            SSD = np.sum(weights * (window_original - window_warped)**2)
        else:
            # Window cut off at the image border: store inf so that SSDs stays
            # index-aligned with coordinates_warped_subpix
            SSD = np.inf
        SSDs.append(SSD)

    # Use the corner with the minimum SSD as the correspondence
    if len(SSDs) > 0 and np.isfinite(min(SSDs)):
        return coordinates_warped_subpix[np.argmin(SSDs)]
    return [None]
```

- Finding correspondences

```python
# Find correspondences using the weighted sum of squared differences
source, destination = [], []
for coordinates in coordinates_original_subpix:
    coordinates1 = match_corner(coordinates)
    if any(coordinates1) and len(coordinates1) > 0 \
            and not all(np.isnan(coordinates1)):
        source.append(coordinates)
        destination.append(coordinates1)
source = np.array(source)
destination = np.array(destination)

# Estimate the affine transform model using all coordinates
model = AffineTransform()
model.estimate(source, destination)

# Robustly estimate the affine transform model with RANSAC
model_robust, inliers = ransac((source, destination), AffineTransform,
                               min_samples=3, residual_threshold=2, max_trials=100)
outliers = ~inliers   # boolean mask of the rejected correspondences
```

- Displaying the results

```python
from matplotlib import pylab
from skimage.feature import plot_matches

fig, axes = pylab.subplots(nrows=2, ncols=1, figsize=(20,15))
inlier_idxs = np.nonzero(inliers)[0]
outlier_idxs = np.nonzero(outliers)[0]
pylab.gray()

# Top panel: correspondences accepted by RANSAC (inliers), drawn in blue
plot_matches(axes[0], image_original_gray, image_warped_gray, source,
             destination, np.column_stack((inlier_idxs, inlier_idxs)), matches_color='b')
axes[0].axis('off'), axes[0].set_title('Correct correspondences', size=20)

# Bottom panel: correspondences rejected by RANSAC (outliers), drawn in red
plot_matches(axes[1], image_original_gray, image_warped_gray, source,
             destination, np.column_stack((outlier_idxs, outlier_idxs)), matches_color='r')
axes[1].axis('off'), axes[1].set_title('Faulty correspondences', size=20)
fig.tight_layout()
pylab.show()
```
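The matching step in this section scores each candidate corner by a Gaussian-weighted sum of squared differences (SSD) between two image windows. The sketch below isolates that scoring on synthetic data; the random test image, the `patch()` and `weighted_ssd()` helpers, and the candidate positions are hypothetical names introduced only for this illustration.

```python
import numpy as np

def gaussian_weights(window_ext, sigma=1.0):
    """2-D Gaussian weights on a square window of side 2*window_ext + 1."""
    y, x = np.mgrid[-window_ext:window_ext + 1, -window_ext:window_ext + 1]
    g = np.exp(-0.5 * (x**2 + y**2) / sigma**2)
    return g / (2 * np.pi * sigma**2)

def weighted_ssd(patch_a, patch_b, weights):
    """Sum of squared differences, weighted per pixel."""
    return np.sum(weights * (patch_a - patch_b) ** 2)

rng = np.random.default_rng(0)            # synthetic data, not the temple image
image = rng.random((40, 40))
ext = 3
w = gaussian_weights(ext, sigma=3)

def patch(img, row, col):
    """Square window of half-width `ext` centered on (row, col)."""
    return img[row - ext:row + ext + 1, col - ext:col + ext + 1]

reference = patch(image, 20, 20)
candidates = [(10, 10), (20, 20), (30, 25)]   # hypothetical corner positions
scores = [weighted_ssd(reference, patch(image, r, c), w) for r, c in candidates]
best = candidates[int(np.argmin(scores))]

print(scores)
print(best)   # the candidate whose window is identical to the reference has SSD 0
```

Because the weights peak at the window center, differences close to the corner itself count more than differences at the window border.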

- Load image 1, generate image 2 by applying an affine transform to it, then find Harris corner features in both images

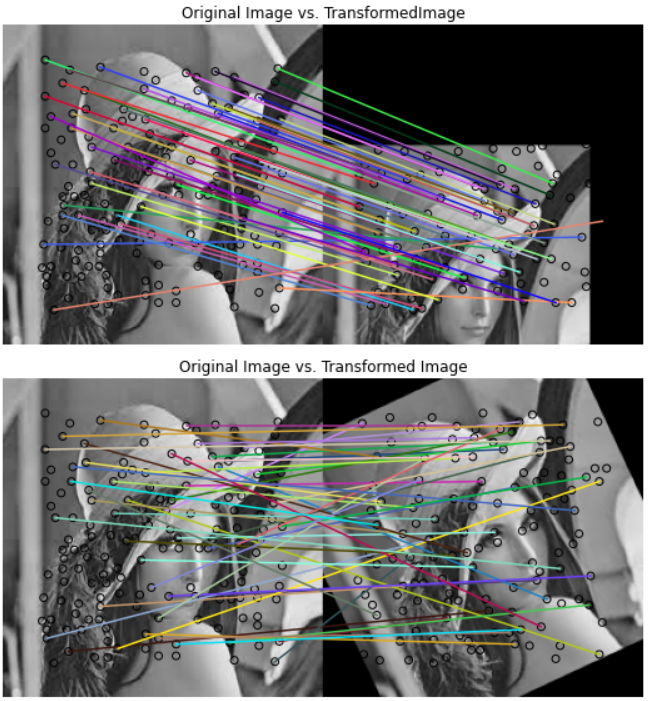
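The robust estimation step can also be tried in isolation. The following sketch, which assumes synthetic point correspondences rather than detected corners, corrupts a known fraction of the matches and checks that `ransac()` separates them from the good ones. The point counts and the size of the corruption are arbitrary choices for this illustration.

```python
import numpy as np
from skimage.measure import ransac
from skimage.transform import AffineTransform

rng = np.random.default_rng(0)

# Ground-truth transform, using the same parameters as the warp in this section.
true_model = AffineTransform(scale=(0.8, 0.9), rotation=0.1, translation=(120, -20))

# 60 synthetic (x, y) points; the first 15 destinations are shifted by 30 to 60
# pixels so that they behave like wrong matches.
source = rng.uniform(0, 200, size=(60, 2))
destination = true_model(source)
destination[:15] += rng.uniform(30, 60, size=(15, 2))

model_robust, inliers = ransac((source, destination), AffineTransform,
                               min_samples=3, residual_threshold=2, max_trials=100)
outliers = ~inliers

print(inliers.sum(), outliers.sum())
print(model_robust.translation)   # should be close to the true translation (120, -20)
```

An affine transform has six parameters, so three point pairs (`min_samples=3`) are the minimum needed to fit one candidate model per trial.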

- Image matching with the BRIEF algorithm

```python
from skimage.io import imread
from skimage import transform
from skimage.color import rgb2gray
import matplotlib.pyplot as plt
from skimage.feature import (match_descriptors, corner_peaks,
                             corner_harris, plot_matches, BRIEF)

image = imread('./images/lena.jpg')
print(image.shape)
img_gray = rgb2gray(image)
#plt.figure(figsize=(15,8))
#plt.imshow(img_gray, cmap='gray')
#plt.axis("off")
#plt.show()

# Two transformed versions of the image: scaled + translated, and rotated by 25 degrees
affine_trans = transform.AffineTransform(scale=(1.2, 1.2),
                                         translation=(0,-100))
img2 = transform.warp(img_gray, affine_trans)
img3 = transform.rotate(img_gray, 25)

# Harris corners serve as keypoints in all three images
coords1, coords2, coords3 = corner_harris(img_gray), corner_harris(img2), corner_harris(img3)
coords1[coords1 > 0.01*coords1.max()] = 1
coords2[coords2 > 0.01*coords2.max()] = 1
coords3[coords3 > 0.01*coords3.max()] = 1
keypoints1 = corner_peaks(coords1, min_distance=5)
keypoints2 = corner_peaks(coords2, min_distance=5)
keypoints3 = corner_peaks(coords3, min_distance=5)

# Extract BRIEF descriptors; extractor.mask drops keypoints too close to the border
extractor = BRIEF()
extractor.extract(img_gray, keypoints1)
keypoints1, descriptors1 = keypoints1[extractor.mask], extractor.descriptors
extractor.extract(img2, keypoints2)
keypoints2, descriptors2 = keypoints2[extractor.mask], extractor.descriptors
extractor.extract(img3, keypoints3)
keypoints3, descriptors3 = keypoints3[extractor.mask], extractor.descriptors

# cross_check=True keeps only mutual nearest neighbors
matches12 = match_descriptors(descriptors1, descriptors2, cross_check=True)
matches13 = match_descriptors(descriptors1, descriptors3, cross_check=True)

fig, axes = plt.subplots(nrows=2, ncols=1, figsize=(15,8))
plt.gray()
plot_matches(axes[0], img_gray, img2, keypoints1, keypoints2, matches12)
axes[0].axis('off')
axes[0].set_title("Original Image vs. Transformed Image")
plot_matches(axes[1], img_gray, img3, keypoints1, keypoints3, matches13)
axes[1].axis('off')
axes[1].set_title("Original Image vs. Transformed Image")
plt.tight_layout()
plt.show()
```

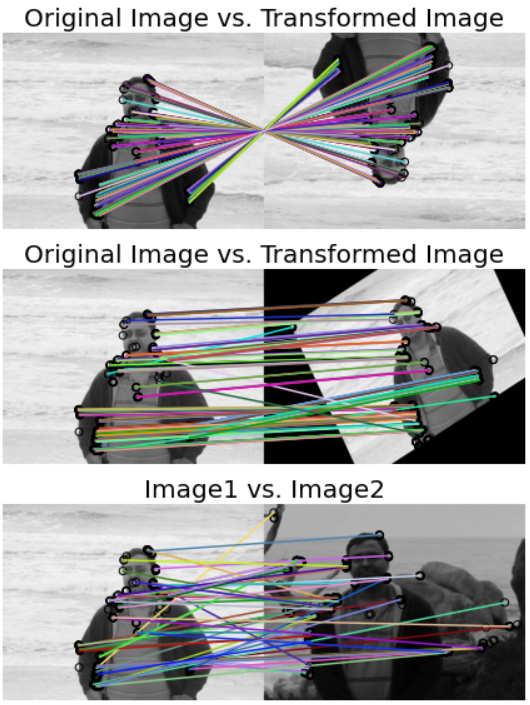
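BRIEF descriptors are binary vectors, and `match_descriptors()` compares boolean descriptors by Hamming distance. The sketch below shows that matching step on synthetic descriptors instead of descriptors extracted from an image; the descriptor count and the number of flipped bits are assumptions chosen for illustration.

```python
import numpy as np
from skimage.feature import match_descriptors

rng = np.random.default_rng(0)

# Five synthetic 256-bit binary descriptors (256 is BRIEF's default length in skimage).
descriptors1 = rng.random((5, 256)) > 0.5

# Second set: the same descriptors in shuffled order, each with 5 bits flipped,
# imitating small appearance changes between two views.
order = rng.permutation(5)
descriptors2 = descriptors1[order].copy()
for row in descriptors2:
    flip = rng.choice(256, size=5, replace=False)
    row[flip] = ~row[flip]

# Each row of `matches` is (index into descriptors1, index into descriptors2).
matches = match_descriptors(descriptors1, descriptors2, cross_check=True)

for i, j in matches:
    differing_bits = np.count_nonzero(descriptors1[i] != descriptors2[j])
    print(i, j, differing_bits)
```

Unrelated random descriptors differ in roughly half of their bits, so a pair that differs in only a handful of bits stands out clearly as the nearest neighbor.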

- Image matching with the ORB algorithm

```python
from skimage.io import imread
from skimage.color import rgb2gray
from skimage import transform
from skimage.feature import (match_descriptors, ORB, plot_matches)
from matplotlib import pylab

img1 = rgb2gray(imread('./images/me5.jpg'))
img2 = transform.rotate(img1, 180)                      # rotated copy
affine_trans = transform.AffineTransform(scale=(1.3, 1.1), rotation=0.5,
                                         translation=(0, -200))
img3 = transform.warp(img1, affine_trans)               # affine-warped copy
# A different photo, resized to the shape of img1
img4 = transform.resize(rgb2gray(imread('./images/me6.jpg')), img1.shape,
                        anti_aliasing=True)

# ORB detects keypoints and computes binary descriptors in one step
descriptor_extractor = ORB(n_keypoints=200)
descriptor_extractor.detect_and_extract(img1)
keypoints1, descriptors1 = descriptor_extractor.keypoints, descriptor_extractor.descriptors
descriptor_extractor.detect_and_extract(img2)
keypoints2, descriptors2 = descriptor_extractor.keypoints, descriptor_extractor.descriptors
descriptor_extractor.detect_and_extract(img3)
keypoints3, descriptors3 = descriptor_extractor.keypoints, descriptor_extractor.descriptors
descriptor_extractor.detect_and_extract(img4)
keypoints4, descriptors4 = descriptor_extractor.keypoints, descriptor_extractor.descriptors

matches12 = match_descriptors(descriptors1, descriptors2, cross_check=True)
matches13 = match_descriptors(descriptors1, descriptors3, cross_check=True)
matches14 = match_descriptors(descriptors1, descriptors4, cross_check=True)

fig, axes = pylab.subplots(nrows=3, ncols=1, figsize=(15,8))
pylab.gray()
plot_matches(axes[0], img1, img2, keypoints1, keypoints2, matches12)
axes[0].axis('off')
axes[0].set_title("Original Image vs. Transformed Image", size=20)
plot_matches(axes[1], img1, img3, keypoints1, keypoints3, matches13)
axes[1].axis('off')
axes[1].set_title("Original Image vs. Transformed Image", size=20)
plot_matches(axes[2], img1, img4, keypoints1, keypoints4, matches14)
axes[2].axis('off')
axes[2].set_title("Image1 vs. Image2", size=20)
pylab.tight_layout()
pylab.show()
```

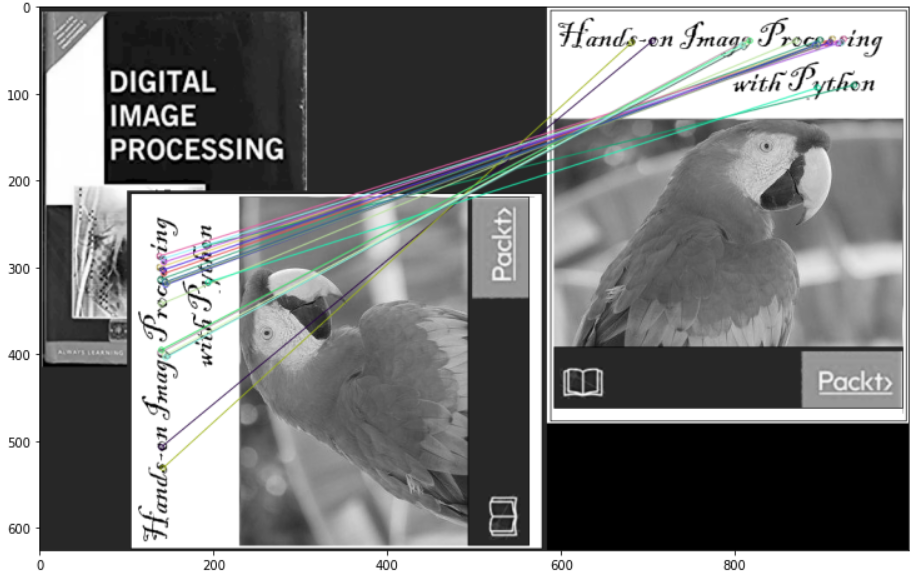
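The BRIEF and ORB examples pass `affine_trans` directly to `warp()`, while the RANSAC example passes `affine_trans.inverse`. The difference matters because `warp()` interprets its second argument as the mapping from output coordinates back to input coordinates. The sketch below illustrates this with a pure translation on a tiny synthetic image; the 5x5 image and the shift of 2 pixels are assumptions made for the illustration.

```python
import numpy as np
from skimage.transform import AffineTransform, warp

# Pure translation by +2 in x; skimage transforms use (x, y) = (col, row) order.
tform = AffineTransform(translation=(2, 0))
print(tform([[0, 0]]))   # forward map of the point (x=0, y=0)

image = np.zeros((5, 5))
image[2, 2] = 1.0        # single bright pixel at row 2, col 2

# warp() looks up, for every OUTPUT pixel, where to sample in the INPUT image.
# order=0 selects nearest-neighbor sampling so the pixel is not blurred.
moved_forward = warp(image, tform.inverse, order=0)   # content moves by +2 columns
moved_backward = warp(image, tform, order=0)          # content moves by -2 columns

print(np.argwhere(moved_forward > 0.5))
print(np.argwhere(moved_backward > 0.5))
```

Passing the transform itself therefore applies its inverse to the image content. For feature matching this is harmless, since any consistent geometric change serves as a test case, but it matters when the estimated model is compared with the parameters that were used to create the warp.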

- Image matching with the ORB algorithm from the OpenCV library

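```python
import cv2
from matplotlib import pylab

# The second argument 0 loads the images as grayscale
img1 = cv2.imread('./images/books.png',0)
img2 = cv2.imread('./images/book.png',0)

orb = cv2.ORB_create()

# Detect keypoints and compute binary descriptors (no mask)
kp1, des1 = orb.detectAndCompute(img1,None)
kp2, des2 = orb.detectAndCompute(img2,None)

# Brute-force matcher with Hamming distance, keeping only mutual best matches
bf = cv2.BFMatcher(cv2.NORM_HAMMING, crossCheck=True)

matches = bf.match(des1, des2)
matches = sorted(matches, key = lambda x:x.distance)   # best matches first

# Draw the 20 best matches; flags=2 hides keypoints that have no match
img3 = cv2.drawMatches(img1,kp1,img2,kp2,matches[:20], None, flags=2)

pylab.figure(figsize=(15,8))
pylab.imshow(img3)
pylab.show()
```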
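What the brute-force matcher with `cv2.NORM_HAMMING` and `crossCheck=True` computes can be approximated in plain NumPy. The sketch below is not OpenCV's implementation; it assumes descriptors stored as rows of packed `uint8` bytes (OpenCV's ORB produces 32 bytes per keypoint by default) and uses synthetic data. The helper names `hamming_matrix` and `cross_check_matches` are hypothetical.

```python
import numpy as np

def hamming_matrix(des_a, des_b):
    """Pairwise bit-level Hamming distances between uint8 descriptor rows."""
    xor = des_a[:, None, :] ^ des_b[None, :, :]        # differing bits, still packed
    return np.unpackbits(xor, axis=-1).sum(axis=-1)    # count the set bits

def cross_check_matches(dist):
    """Keep (i, j, distance) only when i and j are each other's nearest neighbor."""
    best_ab = dist.argmin(axis=1)    # best column for every row
    best_ba = dist.argmin(axis=0)    # best row for every column
    pairs = [(i, int(j), int(dist[i, j]))
             for i, j in enumerate(best_ab) if best_ba[j] == i]
    return sorted(pairs, key=lambda m: m[2])           # smallest distance first

rng = np.random.default_rng(0)
des1 = rng.integers(0, 256, size=(6, 32), dtype=np.uint8)   # synthetic 32-byte rows
des2 = des1[::-1].copy()                                     # same rows, reversed order
des2[0, 0] ^= 0b00000111                                     # flip 3 bits in one row

matches = cross_check_matches(hamming_matrix(des1, des2))
print(matches)   # the pair with the flipped bits sorts last, with distance 3
```

Sorting by distance mirrors the `sorted(matches, key=...)` step in the listing, so that slicing the first entries selects the most similar descriptor pairs.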

- Image matching with the SIFT algorithm from the OpenCV library

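```python
import cv2
from matplotlib import pylab

# The second argument 0 loads the images as grayscale
img1 = cv2.imread('./images/books.png',0)
img2 = cv2.imread('./images/book.png',0)

# SIFT is in the main module since OpenCV 4.4; older opencv-contrib builds
# expose it as cv2.xfeatures2d.SIFT_create()
sift = cv2.SIFT_create()

kp1, des1 = sift.detectAndCompute(img1, None)
kp2, des2 = sift.detectAndCompute(img2, None)

# Default BFMatcher uses the L2 norm, which suits SIFT's float descriptors
bf = cv2.BFMatcher()
matches = bf.knnMatch(des1, des2, k=2)   # two nearest neighbors per descriptor

# Ratio test: keep a match only if it is clearly better than the second best
good_matches = []
for m1, m2 in matches:
    if m1.distance < 0.75*m2.distance:
        good_matches.append([m1])

img3 = cv2.drawMatchesKnn(img1, kp1, img2, kp2, good_matches, None, flags=2)

pylab.figure(figsize=(15,8))
pylab.imshow(img3)
pylab.show()
```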
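The filtering loop in the SIFT listing is a ratio test: a match is kept only when its best distance is below 0.75 times the second-best distance. The sketch below applies the same rule to a small, hypothetical distance matrix in NumPy, so the effect can be checked by hand without running SIFT. The function name `ratio_test` and the distance values are made up for this illustration.

```python
import numpy as np

def ratio_test(dist, ratio=0.75):
    """Return (query, train) index pairs whose best distance beats ratio * second best."""
    order = np.argsort(dist, axis=1)             # train indices sorted by distance, per query
    rows = np.arange(dist.shape[0])
    best, second = order[:, 0], order[:, 1]
    keep = dist[rows, best] < ratio * dist[rows, second]
    return [(int(q), int(t)) for q, t in zip(rows[keep], best[keep])]

# Hypothetical distances: 3 query descriptors (rows) vs 4 train descriptors (columns).
dist = np.array([[0.2, 0.9, 1.1, 1.0],    # 0.2 vs 0.9: clear winner, kept
                 [0.8, 0.7, 0.9, 1.2],    # 0.7 vs 0.8: ambiguous, dropped
                 [1.5, 1.4, 0.3, 1.3]])   # 0.3 vs 1.3: clear winner, kept

good = ratio_test(dist)
print(good)   # [(0, 0), (2, 2)]
```

The second query is rejected because its two closest candidates are nearly equally good, which is the typical signature of a repeated or non-distinctive feature.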